If you believed the loudest takes on the internet, AI has already fired us all, stolen our jobs, and is now typing this article while we cry into our keyboards. Somewhere between “ChatGPT will replace humanity” and “my toaster is sentient,” the conversation about AI turned into pure science fiction.

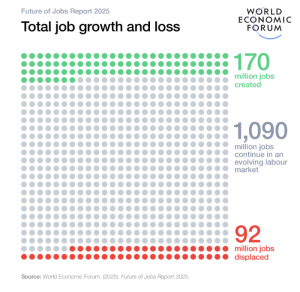

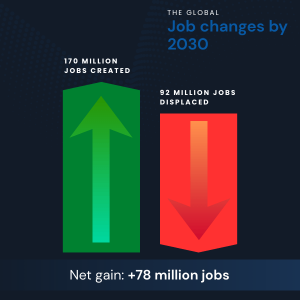

Here is the less cinematic version. According to the World Economic Forum, global labor markets are not collapsing. By 2030, technology-driven shifts are expected to create about 170 million new jobs while displacing around 92 million, resulting in a net gain of roughly 78 million roles. That doesn’t look like an extinction event; it looks like chaos with paperwork. In the United States, Goldman Sachs estimates that only about 6–7% of jobs face displacement in baseline AI adoption scenarios. That’s disruption, but by no means devastation. Your job is more likely to be reorganized by automation than eliminated by it.
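As a quick check on the arithmetic, the sketch below recomputes the net figure from the two World Economic Forum numbers quoted above. The function names and the "churn" measure (jobs created plus jobs displaced) are illustrative additions, not terms taken from the report.

```python
# Figures quoted in the text (World Economic Forum, projected to 2030),
# expressed in millions of jobs.
JOBS_CREATED_M = 170
JOBS_DISPLACED_M = 92


def net_change(created: int, displaced: int) -> int:
    """Net change in jobs; positive means more roles created than displaced."""
    return created - displaced


def churn(created: int, displaced: int) -> int:
    """Total roles touched either way (an illustrative measure, not a WEF term)."""
    return created + displaced


net = net_change(JOBS_CREATED_M, JOBS_DISPLACED_M)
total_churn = churn(JOBS_CREATED_M, JOBS_DISPLACED_M)

print(f"Net change: +{net} million jobs")
print(f"Total churn: {total_churn} million jobs created or displaced")
```

The net figure is positive, but the churn behind it is more than three times larger, which is one way to read "chaos with paperwork": the headline can look healthy while a great many roles are moving.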

If AI isn’t taking over work, the uncomfortable question is: what exactly is it destabilizing instead? To answer it, we turn to the data from trusted sources.

Source: World Economic Forum

What AI Is Actually Doing to Jobs (According to the Data)

The idea that AI will wipe out work entirely echoes the “Luddite Fallacy,” the long-standing fear that new technology inevitably causes permanent mass unemployment, as the Guardian notes. History suggests otherwise. Automation has removed certain tasks and taken over some specialized fields, but it has rarely eliminated entire professions. Over the past 60 years, only one widely recognized occupation, the elevator operator, has disappeared completely.

Job Disruption vs Job Destruction

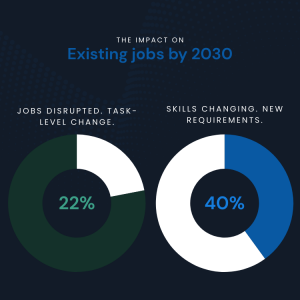

One of the biggest mistakes in the AI jobs debate is treating “disruption” as a polite synonym for “everyone gets fired.” The data does not support that interpretation, even a little. According to the World Economic Forum, about 22% of global jobs are expected to be disrupted by 2030. Disrupted does not mean deleted. It implies altered, reshaped, or partially reassembled. Most of this disruption happens at the task level. Jobs are being reconfigured internally as AI takes over specific activities while leaving the role itself intact. Tasks get automated, merged, elevated, or pushed elsewhere. Someone still has the job title, but the work inside it looks very different from what it did five years ago.

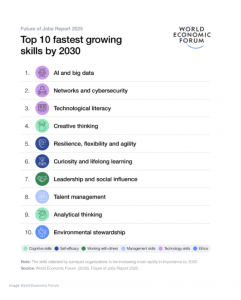

The same report shows that nearly 40% of skills required in existing jobs will change by 2030. That is a reskilling statistic more than a layoff statistic: the role survives, but the skillset does not. This is why so many people feel unsettled even when employment numbers look fine. The Hill reports that 63% of employers say skill scarcity prevents them from preparing for future changes. Demand is growing fastest for AI, big data, and cybersecurity skills, but human abilities like critical thinking, resilience, leadership, and teamwork remain just as important.

Source: World Economic Forum

Automation Potential Is Not a Layoff Forecast

One statistic gets quoted endlessly whenever the AI panic needs fresh oxygen: around 57% of US work hours are technically automatable with existing technology. That figure comes from McKinsey, and it is accurate, yet routinely misunderstood. “Technically automatable” does not mean “about to disappear.” It means that in a lab, with perfect conditions, unlimited budgets, zero regulation, and very patient lawyers, today’s technology could replicate parts of that work. Real economies, however, operate in offices, factories, hospitals, and government systems that still run on software old enough to vote.

Major technologies, from electricity to industrial robotics to cloud computing, took decades to move from technical feasibility to widespread adoption. AI is not exempt from that rule, despite how impressive the demos look on X. According to Goldman Sachs, only about 9.3% of US companies have used generative AI in production workflows recently.

That figure does not count pilots, experiments, or “we tried ChatGPT once”; it means real operational use. Most headlines ignore the fact that capability is not adoption, and adoption is not displacement. AI can theoretically do a lot; whether organizations trust it, afford it, regulate it, and redesign work around it is a much slower story.

The Shift No One Tracks: Task Erosion Inside “Safe” Jobs

A common reason the AI debate feels so confusing is that many people look at job titles to understand what’s happening.

Tasks Are Disappearing Faster Than Jobs

The data looks somewhere else entirely. Research aligned with the International Monetary Fund and the OECD suggests that around 60% of jobs in advanced economies will experience significant task-level change. Once again, the work inside the role shifts, quietly and unevenly.

At the same time, research from the National University estimates that about 66% of tasks in 2030 will still require human skills or direct human–AI collaboration. Humans are not being removed from the loop, but the loop itself is being renovated and streamlined.

A good example of task-level change is the latest version of Claude, which integrates with Google Sheets. It can manage data analysis and automate repetitive tasks that were previously handled by human specialists. While this can significantly boost workflow efficiency, it raises questions about how entry-level roles built on similar tasks will change as AI systems like Claude take on more responsibilities.

What is disappearing are the tasks that once made roles coherent learning environments. According to McKinsey, demand in job postings is already declining for activities like writing, research, summarization, and basic analysis. These were the tasks that taught people how to think before they were expected to decide.

The Entry-Level Collapse: The Most Dangerous Long-Term Risk

The technology follows a very predictable pattern: junior work is automated first. Processes that are repetitive, template-driven, and historically assigned to early-career employees are exactly the tasks AI systems handle best.

According to analysis cited by Bloomberg, AI can already take over around 53% of the tasks performed by market research analysts and roughly 67% of the tasks handled by sales representatives. When those roles are streamlined, the automation doesn’t hit senior judgment or relationship-building. It mostly hits the junior layer: data gathering, first-pass analysis, outreach, follow-ups, reporting. The work people used to do before they were trusted with decisions.

When these task-level shifts are aggregated across industries, estimates suggest that nearly 50 million US jobs could be affected at the entry level over the coming years, according to combined projections published on Medium.

This matters because entry-level jobs were never meant to be fully efficient. They exist so that people who are new to a field can learn, make mistakes, and build real experience over time. That’s how beginners develop judgment and understand how work actually functions. If companies remove these roles too fast, they remove the stepping stones that help people grow into experts.

Youth Impact Is Already Visible

This problem is already showing up in the numbers, and it’s hitting younger workers first. According to Goldman Sachs, unemployment among 20–30-year-olds in tech-exposed occupations has risen by roughly three percentage points since early 2025. That increase is considerably higher than for older workers in the same fields and higher than the broader labor market trend. In other words, when AI-related efficiency gains slow hiring, juniors feel it before anyone else does.

Surveys cited across World Economic Forum research show that about 49% of Gen Z job seekers believe AI has already reduced the value of their degree in the labor market. That belief is not rooted in pessimism alone. Entry-level hiring is declining, while job descriptions quietly demand broader skill sets, AI fluency, and “strategic thinking” from candidates with minimal real-world experience.

This creates a structural risk that doesn’t show up in quarterly employment reports: fewer juniors are being hired, those who are hired are expected to perform at a higher level immediately, and fewer organizations are willing to invest in long ramp-up periods. The long-term consequence is simple and uncomfortable: no juniors today means no seniors tomorrow. Careers do not skip steps just because technology has gotten better.

Skills Gap: The Real Crisis Hiding Behind AI Headlines

AI may grab attention, but the real issue is the widening skills gap behind the scenes. In practice, about 59% of the global workforce will need reskilling or upskilling by 2030 just to keep up with how jobs are changing. That is more than half of everyone currently working. More worrying is what happens next. Roughly 11% of workers are unlikely to receive that reskilling at all. Not because they refuse, but because systems are slow, training is expensive, and access is uneven. When you scale that gap globally, it translates into more than 120 million people at medium-term risk of redundancy, not because their jobs vanished, but because their skills stopped matching what their roles quietly became.

Employers see this tension clearly. About 63% now cite skills gaps as the single biggest barrier to business transformation. The technology is moving faster than people can realistically adapt. The real crisis is not automation itself but the mismatch between the two.
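The reskilling percentages in this section are easier to compare on a common base. A minimal sketch, assuming the 59% and 11% figures are both shares of the same global workforce and that the 11% is a subset of the 59%; the variable names are illustrative:

```python
# Percentages quoted in the text, both read as shares of the global workforce.
NEED_RESKILLING_PCT = 59       # workers needing reskilling or upskilling by 2030
UNLIKELY_TO_GET_IT_PCT = 11    # workers unlikely to receive that reskilling

# Assumption: the 11% sits inside the 59%, so the remainder is expected
# to receive some form of training.
likely_trained_pct = NEED_RESKILLING_PCT - UNLIKELY_TO_GET_IT_PCT

# Share of the "needs reskilling" group that is expected to miss out.
share_left_behind = UNLIKELY_TO_GET_IT_PCT / NEED_RESKILLING_PCT

print(f"Need reskilling and likely to get it: {likely_trained_pct}% of workers")
print(f"Share of those needing reskilling who miss out: {share_left_behind:.1%}")
```

Under those assumptions, nearly one in five workers who need new skills would not get them.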

Why “Upskill” Is Not Enough

“Just upskill” has become the corporate equivalent of “just calm down.” It sounds reasonable and supportive, and it proves deeply insufficient once you look at the timing. According to Beamery, about 77% of employers say they plan to upskill their workforce in response to AI-driven change. That intention collides with another number from the same data set: 41% of employers also plan to reduce their workforce where AI can automate tasks.

This is a timing problem, because reskilling takes years. It requires structured programs, real projects, mentorship, and the psychological safety to be bad at something before getting good at it. Automation decisions, on the other hand, happen in quarters.

The result is a widening gap between intention and outcome. Employers want more capable, adaptable workers, but they adopt tools faster than people can realistically adjust. Workers are told to reskill while simultaneously watching roles disappear, teams shrink, and expectations rise. The mismatch is not about motivation or willingness. It’s about speed. Technology compounds quickly. Human adaptation does not. And no amount of motivational LinkedIn posts can compress that timeline.
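The two employer figures quoted earlier in this section (77% planning to upskill, 41% planning to reduce headcount) also say something about overlap. A minimal sketch, assuming both percentages describe the same group of surveyed employers, as the phrase "same data set" suggests; the function name is hypothetical:

```python
def min_overlap_pct(a_pct: float, b_pct: float) -> float:
    """Smallest possible share (in %) that belongs to both groups.

    By inclusion-exclusion, two groups covering a% and b% of the same
    population must overlap by at least a + b - 100 percentage points.
    """
    return max(0.0, a_pct + b_pct - 100.0)


PLAN_TO_UPSKILL_PCT = 77.0   # employers planning to upskill their workforce
PLAN_TO_REDUCE_PCT = 41.0    # employers planning to reduce their workforce

overlap = min_overlap_pct(PLAN_TO_UPSKILL_PCT, PLAN_TO_REDUCE_PCT)
print(f"At least {overlap:.0f}% of employers plan to do both")
```

That is only a lower bound. The true overlap could be as high as 41%, which would mean every employer planning cuts is also promising training.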

Creative Industries: The Early Warning System

If you want to see AI’s impact without speculation, look at creative industries. They are not a prediction market. They are a live experiment already running. According to data from the Association of Photographers in the UK, around 58% of photographers have already lost work directly to generative AI. This is the present tense.

The financial impact is equally blunt. The average reported income loss sits around £35,000 per year, and reported losses increased by roughly 142% year over year. Based on current trends, industry groups warn that the UK’s £2.4 billion photography sector could be hollowed out within five years if no structural changes occur.

What’s Being Destroyed Isn’t “Creativity”

Despite the rhetoric, creativity itself is not disappearing. What is disappearing is the economic middle that sustains creative careers. Entry- and mid-level roles are being hit first, while established names with strong brands, reputations, or niche expertise remain relatively protected. This pattern shows up consistently across photography, illustration, music, and writing.

There is also a behavioral signal that rarely gets discussed. Surveys show a 46% reduction in publicly shared creative work, driven by fears that content will be scraped for training data without consent or compensation. Fewer people are sharing. Fewer people are experimenting in public. That has long-term consequences for talent discovery and skill development.

The scale mismatch makes the risk more evident. The UK creative sector employs roughly 2.4 million people. The UK AI sector employs around 86,000 people. Even if AI creates new roles, the numbers do not line up neatly. The result: top performers keep their positions, beginners find it harder to get in, and without clear action, the path that trains and supports the next generation slowly disappears.
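To put the scale mismatch in concrete terms, the sketch below compares the two UK employment figures quoted above. It uses only those two numbers; the variable names are illustrative.

```python
# Employment figures quoted in the text (approximate headcounts).
UK_CREATIVE_WORKERS = 2_400_000
UK_AI_WORKERS = 86_000

# How many creative-sector workers there are for each AI-sector worker.
ratio = UK_CREATIVE_WORKERS / UK_AI_WORKERS

# AI-sector employment as a percentage of creative-sector employment.
ai_share_pct = UK_AI_WORKERS / UK_CREATIVE_WORKERS * 100

print(f"Creative workers per AI-sector worker: {ratio:.1f}")
print(f"AI sector as a share of creative employment: {ai_share_pct:.1f}%")
```

On these figures there are roughly 28 creative workers for every AI-sector worker, so the AI sector would need to grow many times over before it could absorb even a modest share of displaced creative roles.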

Productivity Gains vs Human Costs

This is the part of the AI story that executives love, economists respect, and PowerPoint decks aggressively highlight in bold. According to Goldman Sachs, widespread adoption of generative AI could raise labor productivity by roughly 15% in developed economies over time. That is a massive number. Productivity gains of that size usually define entire economic eras, not quarterly reports.

Recent estimates from McKinsey & Company suggest generative AI could generate between $2.6 trillion and $4.4 trillion in annual economic value across 63 analyzed use cases. To put that into perspective, that’s roughly comparable to the entire 2021 GDP of the United Kingdom, which stood at about $3.1 trillion.

From a systems perspective, this is real progress. Higher productivity means more output with the same or fewer inputs, which in theory creates room for higher wages, lower prices, new industries, and better use of human effort. The issue is not the upside itself, but how we manage the transition to get there.

Inequality Amplification: Who Bears the Risk

AI does not land on the workforce evenly, and gender is one of the clearest fault lines. According to the National University, about 79% of employed women in the United States work in roles classified as high risk for automation, compared with roughly 58% of men. Women are disproportionately represented in administrative, clerical, support, and service roles, precisely the categories where task automation arrives first.

The imbalance becomes more evident in high-income countries. As the European Centre for Women and Technology states, about 9.6% of women’s jobs fall into the highest-risk category for AI-driven disruption, compared with just 3.2% of men’s jobs. These differences tend to compound in the long run, especially when reskilling opportunities, leadership pipelines, and access to AI-creating roles remain uneven.

What makes this even more risky is that many of the roles most exposed to automation, such as administrative assistants, customer service representatives, retail cashiers, bookkeeping clerks, call center agents, and data entry staff, have historically provided stable income, flexible hours, and accessible entry or re-entry points into the workforce.

Source: World Economic Forum

Geographic and Class Effects

The same unevenness appears across regions and income levels. Research from the National University suggests that AI could affect around 60% of jobs in advanced economies, while only about 26% of jobs in low-income countries face similar exposure. In practice, this means the nature of the risk differs from one economy to the next.

In wealthier economies, a large share of work is cognitive, digital, and white-collar, exactly where AI performs best. At the same time, offshoring combined with AI-driven efficiency increases competition for those roles. A task that can be automated can often also be relocated. As a result, these forces compress wages, narrow entry points, and intensify competition at the lower and middle tiers of white-collar work.

AI rearranges inequality, often putting pressure on groups and regions that already have fewer buffers. And because this redistribution happens gradually, through hiring patterns and task changes rather than mass layoffs, it is easy to miss until the effects are already locked in.

What We Should Actually Be Afraid Of (With Data)

The real danger in the AI transition is the slow erosion of the structures that made careers sustainable in the first place.

The Risks Hiding in Plain Sight

With roughly 59% of the global workforce needing reskilling by 2030 and a meaningful share unlikely to receive it, the labor market risks splitting into two groups: those who can seamlessly adapt and those who slowly fall out of alignment.

There is also a growing oversight problem. As AI systems generate more outputs, humans are increasingly asked to supervise, validate, and approve decisions they were never trained to produce themselves. When junior learning tasks disappear, judgment is expected without foundation. Errors become harder to catch, accountability becomes blurrier, and responsibility concentrates upward.

What the Data Does Not Support

What the data consistently fails to show is the scenario dominating popular imagination. There is no credible evidence pointing to sudden mass unemployment driven by AI. There is no sign of total job collapse. There are no fully automated professions operating end-to-end in real-world conditions.

Across the studies cited in this article, the pattern is consistent: jobs change, tasks shift, and roles are redesigned. But humans remain in the loop, often more tightly than before. The fear, then, is misplaced. AI is not removing employees from work; it is removing the buffers, ladders, and training grounds that once helped people grow into it.

Conclusion: AI Isn’t Taking Over the World. We’re Just Ignoring the Right Risks

If you strip away the hype, the panic, and the oddly confident LinkedIn prophets, the data tells a surprisingly calm story. Net job growth remains positive. According to the World Economic Forum, more jobs are expected to be created than displaced over the next decade. Human skills are, in fact, becoming more valuable precisely because machines now handle the obvious parts.

What is truly dangerous is not machine dominance. Careers are being reshaped faster than institutions can adapt. Entry-level roles are thinning out. Skills are fragmenting. Learning paths are breaking. And oversight responsibilities are being pushed onto people who were never given time to develop judgment the old way.

AI does not make humans obsolete. It makes weak systems visible. It exposes outdated education models, brittle career ladders, and organizations that optimized for short-term efficiency without thinking about long-term capability. We can either redesign how people grow, learn, and advance in an AI-shaped economy, or we can keep arguing about robot takeovers while the foundations quietly erode.

FAQ

Will AI take most jobs in the next decade?

No credible data supports that scenario. Research from institutions like the World Economic Forum and Goldman Sachs consistently shows task-level disruption rather than mass job elimination. Jobs change faster than they disappear, and most roles retain a human core even as tasks shift.

Why does it still feel like AI is everywhere and jobs are disappearing?

Because task erosion is invisible. When writing, research, or analysis quietly vanish from roles, people feel displaced even if their job title remains. Hiring slows, expectations rise, and learning opportunities shrink. That discomfort shows up before unemployment numbers do.

Are creative jobs uniquely vulnerable?

Creative industries are not uniquely vulnerable, but they are early indicators. They show what happens when AI hits pricing, volume, and entry-level work before regulation, licensing, or new economic models catch up. The same pattern is likely to repeat elsewhere.

Is reskilling the solution?

Reskilling is necessary but not sufficient. Training takes years, while automation decisions happen quickly. Without protected entry points, mentoring, and real work-based learning, “upskill” becomes an empty instruction rather than a viable pathway.

What skills actually matter going forward?

Judgment, synthesis, domain expertise, collaboration, and the ability to work with AI rather than around it. Tools will change. These skills endure. The challenge is ensuring people have a way to develop them before being asked to perform at senior levels.

Should individuals be afraid of AI?

Fear is not very useful here, but awareness is. AI is a set of choices made by organizations, governments, and designers. The real question is whether those choices will include sustainable human pathways or quietly remove them.